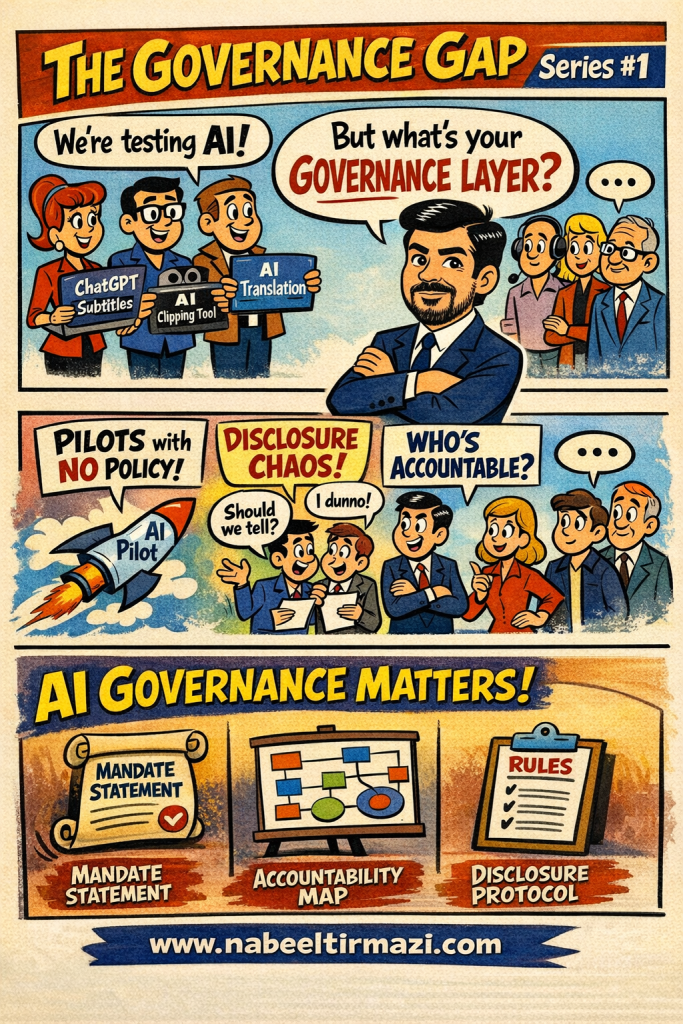

There is a scene playing out across broadcast houses and newsrooms in Asia-Pacific that has become almost predictable.

A team adopts an AI tool: a subtitle generator, a translation assistant, an automated clipping system. Results look promising. Productivity goes up. Leadership is cautiously impressed.

Then someone in a meeting asks the question nobody prepared for:

“Do we have a governance layer for this?”

Silence.

That silence is not a technology problem. It is a structure problem.

Piloting Without Policy

The speed of AI adoption has outpaced the speed of institutional thinking. Teams are running powerful tools before their organizations have defined what those tools are allowed to do, who is responsible when they go wrong, and whether audiences or stakeholders are told that the tools are being used at all.

This is not a failure of enthusiasm. It is a failure of structure.

When an organization launches an AI pilot without a policy framework in place, it creates three cascading problems that compound over time.

First, there is no mandate. No documented statement of intent defining why the tool is being used, what problem it solves, and what ethical limits govern its use. Without a mandate, every decision about the tool is improvised, which means different people are making different calls based on different assumptions, often without realizing it.

Second, there is no accountability map. No record of who approved the tool, who monitors its outputs, who flags concerns, and who makes the final call when the system produces a problematic result.

The “AI Champion” title exists in many organizations. What rarely exists is a written accountability structure that answers the harder questions: Who reviews outputs before they reach the public? Who receives a complaint? Who has authority to suspend a tool mid-deployment?

Third, there is no disclosure protocol. No agreed position on whether to tell employees, audiences, or partners that AI was involved in a given output.

Most organizations treat this as a communications issue to handle later. It is not. It is an ethical and legal question that needs to be answered before the tool goes live.

The Disclosure Problem Is More Urgent Than Most Teams Think

In media contexts specifically, the stakes around disclosure are high and getting higher.

A broadcaster using AI-generated subtitles without disclosure is making an editorial choice about transparency, whether they intend to or not.

A newsroom using an AI tool to draft summaries without telling its audience is following a practice that regulators, press freedom bodies, and audiences themselves are beginning to scrutinize more closely across the region.

The question is not whether to disclose. The question is how to disclose clearly, consistently, and in a way that builds institutional trust rather than eroding it.

The Minimum Viable Governance Structure

The solution is not to stop using AI. The solution is to build the governance layer before, or at minimum alongside, the pilot.

Three elements form the minimum viable governance structure for any organization beginning this journey.

A Mandate Statement documents why the organization is adopting AI, which values guide that adoption, and what the explicit limits are. This is a leadership commitment, not a technical document. It belongs at board or executive level, not in the IT department.

An Accountability Map assigns specific governance responsibilities to named roles at every stage of the AI lifecycle, from procurement and testing through deployment and ongoing review.

This is not bureaucracy for its own sake. It is the organizational equivalent of an evacuation plan. You hope you never need it urgently. You must have it in place before the emergency, not because of it.

A Disclosure Protocol establishes the organization’s standard position on transparency: when AI involvement is disclosed, to whom, in what format, and how that disclosure is worded consistently across the organization.
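For teams that track their tools in a register or spreadsheet, the three elements can be captured as a simple record and checked before a pilot goes live. The sketch below is one possible shape, not a standard: the class name, field names, lifecycle stage identifiers, and the example roles are all assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# Lifecycle stages taken from the text; the identifiers are illustrative.
LIFECYCLE_STAGES = ("procurement", "testing", "deployment", "ongoing_review")


@dataclass
class GovernanceRecord:
    """Hypothetical governance record for a single AI tool."""
    tool_name: str
    mandate_statement: str = ""  # why the tool is used and what its limits are
    accountability_map: Dict[str, str] = field(default_factory=dict)  # stage -> named role
    disclosure_wording: str = ""  # standard wording used when AI involvement is disclosed

    def missing_elements(self) -> List[str]:
        """List the governance elements that are still undefined."""
        missing = []
        if not self.mandate_statement.strip():
            missing.append("mandate statement")
        unassigned = [s for s in LIFECYCLE_STAGES if not self.accountability_map.get(s)]
        if unassigned:
            missing.append("accountability map: " + ", ".join(unassigned))
        if not self.disclosure_wording.strip():
            missing.append("disclosure protocol")
        return missing

    def ready_for_pilot(self) -> bool:
        """True only when all three elements are in place."""
        return not self.missing_elements()


# Example with made-up roles: two lifecycle stages and the disclosure wording are undefined.
record = GovernanceRecord(
    tool_name="subtitle generator",
    mandate_statement="Speed up subtitling; an editor reviews every output before broadcast.",
    accountability_map={"procurement": "Head of Technology", "testing": "Subtitling Lead"},
)
print(record.missing_elements())
print(record.ready_for_pilot())
```

In this example the record reports the unassigned deployment and ongoing review stages and the missing disclosure wording, so the pilot would not be cleared. The point is not the code itself but the discipline it encodes: a tool with any empty field is not ready to go live.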

Governance Is Not the Slow Lane

Organizations that build governance into their AI adoption now will be the ones that earn and keep institutional trust as scrutiny of AI use increases across Asia-Pacific.

Governance is the foundation that makes speed sustainable, not the obstacle to it.

The question is not whether your organization will eventually need AI governance.

The question is whether you build it before or after your first governance crisis.