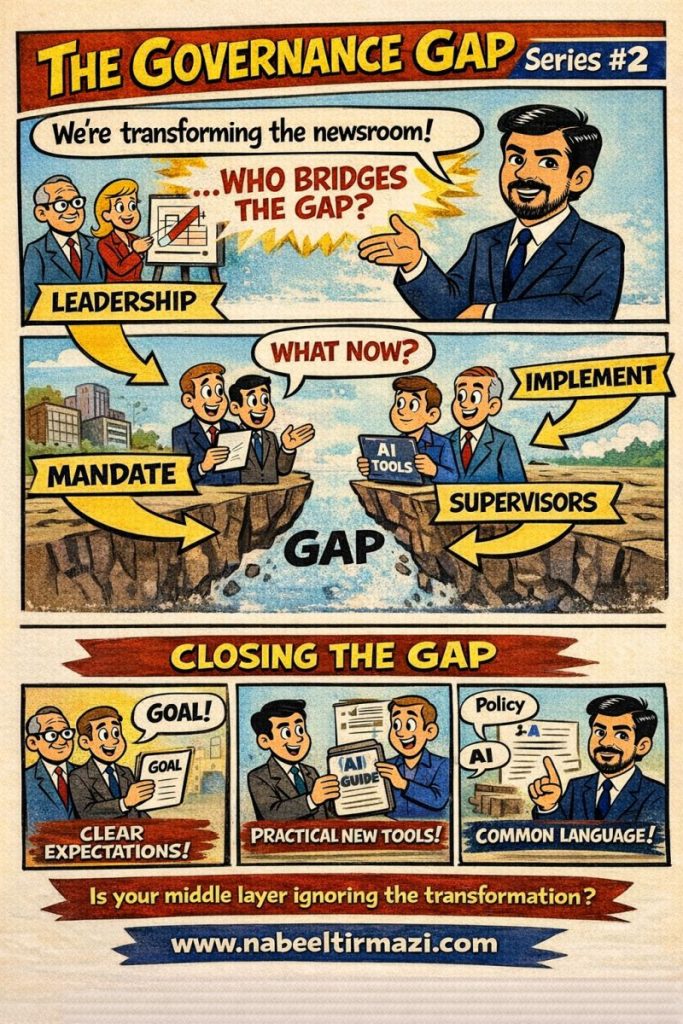

Leadership has made the announcement. The strategy deck has been presented. The slides say “AI-powered transformation.” The vision is clear at the top.

Walk down one floor into the editorial meeting, the production hub, the training room, or the operations office, and ask the supervisors what their role is in this transformation. Most will pause. Some will shrug. A few will say they are waiting for further instructions.

That pause is the governance gap.

The Middle Layer Problem

In most organizations undergoing AI adoption, the conversation happens at two levels at once, and the two rarely connect. At the leadership level, there is vision, strategy, mandate, and investment. At the implementation level, there are AI tools being tested, vendors being evaluated, and junior staff experimenting on their own initiative.

The middle layer sits between the two: team leads, section editors, floor supervisors, department heads, senior producers. These are the people who translate leadership decisions into daily behavior. They are also, in most AI transformation stories, the most neglected layer in the governance conversation.

Leadership gives the mandate. Implementers deploy the tools. Supervisors are left holding a piece of paper that says “transform” with no practical guidance on what that means for them, their teams, or their decisions starting Monday morning.

Why This Gap Is Dangerous

The middle-layer governance gap is not just an efficiency problem. It is a risk problem.

When supervisors do not understand the organization’s AI governance position, they cannot enforce it. When they have no common language to discuss AI with their teams, they cannot set expectations or address concerns. When they have received no practical tools (no guidance documents, no use-case frameworks, no escalation protocols), they manage AI adoption by instinct rather than by policy.

This produces inconsistency. Within a single organization, one team may be using AI tools openly and creatively. Another, under a different supervisor, may be avoiding them entirely out of uncertainty. A third may be using them in ways that contradict the organization’s editorial standards, ethical commitments, or legal obligations, simply because no one told the supervisor where the boundaries were.

Inconsistency is the first sign that governance has failed. It means the policy exists on paper but not in practice. And in a broadcast organization where editorial decisions carry public trust implications, that gap between paper and practice is not a minor operational issue. It is a direct risk to that trust.

Closing the Gap Requires Three Things

The solution is not more leadership announcements. The solution is structured investment in the middle layer, treating supervisors not as passive recipients of transformation but as active governance actors.

Three practical commitments make this concrete.

The first is clear expectations. Supervisors need to know exactly what their governance role is. This means documented guidance that tells them what decisions they are empowered to make about AI use in their teams, what they must escalate, what they must monitor, and how to handle staff questions. Expectations that are not written down do not exist in an organizational context.

The second is practical tools. A governance policy document is not enough. Supervisors need usable reference materials: a plain-language AI guide, a quick-reference checklist for evaluating AI tool use, a one-page summary of disclosure obligations, and a clear escalation path. These are the materials that make governance real on a daily basis rather than theoretical in a quarterly meeting.

The third is a common language. Leadership speaks in strategy terms: mandate, accountability, framework, policy. Implementation teams speak in technical terms: prompt, model, output, hallucination. Supervisors need a shared vocabulary that lets them communicate fluently in both directions. Building that common language is not a soft skill exercise. It is a structural governance requirement.

The Question Every Organization Should Ask

Is your middle layer being left out of the transformation conversation?

Not out of resistance. Not out of incompetence. But because no one has deliberately included them in the governance design, given them the language to participate, or equipped them with the tools to lead at their level.

AI governance does not travel automatically from a strategy document to the newsroom floor. It requires a deliberate bridge built from clear expectations, practical resources, and a shared language that connects vision to practice at every layer of the organization.

That bridge is not a technology problem. It is a leadership and learning design problem. And it is one that can be solved.