Before any AI tool goes live inside a broadcast organization, leadership should answer one simple question:

Has the organization made institutional decisions about how AI will be used?

In many cases, the honest answer is no.

AI adoption often starts quietly. A producer uses a tool to support research. A sub-editor tests it for draft scripts. A digital team experiments with captions, summaries, or translations. A production unit tries automated subtitles. Each decision feels small. Each use case may appear harmless.

The problem begins when these individual decisions accumulate without an institutional position behind them.

That is how governance gaps form.

Not because people are careless. Not because staff are trying to bypass policy. Most of the time, the organization simply has not created one clear place where editorial, legal, compliance, and production teams can agree on basic rules before the technology enters daily practice.

This is why every broadcast organization needs a focused half-day AI governance meeting before deployment.

It does not need to be a technical briefing. It does not need to be led by a vendor. It does not require a deep machine learning discussion.

It needs the right people in the room.

Editorial leadership. Legal. Compliance. Senior producers. Newsroom supervisors. Digital leads. Technical representatives where needed.

The purpose is to answer governance questions in writing before the first incident forces the organization to answer them under pressure.

Why Institutional Answers Matter

When AI is used in a newsroom, responsibility cannot sit only with the individual user.

If an AI-assisted summary distorts a public statement, if an automated subtitle misrepresents a speaker, or if a draft script includes an unchecked claim, the issue is not only that one staff member used a tool poorly.

The larger question is this:

What did the organization say about that tool, that use case, and that editorial category before it was used?

If there is no answer, the organization has a governance gap.

A producer may have used the tool. An editor may have approved the output. A technical team may have enabled access. But the broadcast organization carries the public-facing responsibility.

That is why AI governance must move from individual judgment to institutional clarity.

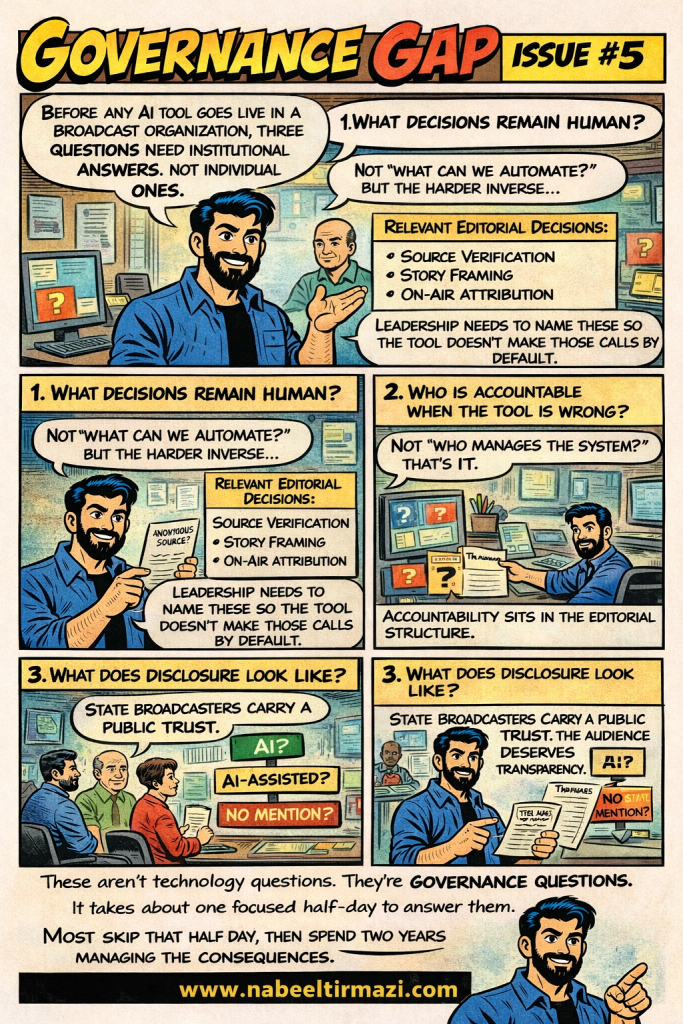

The half-day meeting should begin with three questions.

1. What Decisions Must Remain Human?

Most AI conversations begin with what can be automated.

Broadcast organizations need to begin somewhere else. They need to identify the decisions that must remain under human editorial authority.

Source verification is one of them.

A journalist’s judgment about whether a source is credible, whether a claim is independently confirmed, and whether a person’s identity should be protected cannot be treated as a routine machine task. These decisions carry legal, ethical, and public trust implications.

Story framing is another.

The choice of angle, context, language, prominence, and community representation shapes how audiences understand events. That choice should not be quietly transferred to an AI system through convenience or habit.

On-air attribution also needs rules.

Who gets credited? How is AI involvement described? When should audiences be told that AI supported the production process?

These categories need to be named clearly.

If leadership does not define where human judgment must remain, the newsroom will create its own informal practices. Those practices may vary from desk to desk, producer to producer, and programme to programme.

That inconsistency becomes a governance risk.

2. Who Is Accountable When the Tool Is Wrong?

A common mistake is to treat AI accountability as a technical issue.

If the tool fails, people often look toward IT or the system administrator. That may help identify a technical fault, but it does not answer the editorial question.

In broadcasting, accountability must sit inside the editorial structure.

If AI output reaches air, publication, or distribution, the accountability chain should be clear. The producer who used the output has responsibility. The editor who approved it has responsibility. The senior editorial leader who approved the policy environment also carries responsibility.

This does not remove the role of technical teams.

They still manage access, system performance, security, procurement, integration, and vendor relationships. Their role is real. But editorial accountability cannot be transferred to them simply because a machine was involved.

The organization needs an accountability map before deployment.

That map should answer basic questions:

Who can approve AI-assisted content?

Which use cases need senior review?

When must legal or compliance be involved?

Who carries responsibility if AI-supported content causes harm, misleads the audience, or creates reputational risk?

These answers should not be created after a crisis. They should be written before the tool enters the production pipeline.

3. What Does Disclosure Look Like?

Disclosure is one of the most sensitive questions in broadcast AI governance.

Public broadcasters, state media institutions, and public service media carry a specific kind of trust. Commercial broadcasters face similar expectations, especially when credibility is central to their brand.

Audiences deserve clarity about how content is produced, especially when AI has materially shaped what they see, hear, or read.

The organization needs to decide when AI involvement should be disclosed, how it should be worded, where it should appear, and which content categories require it.

Vague labels are not enough.

Saying “AI was used” does not tell the audience much.

“AI-assisted” is more useful, but only if the organization defines what it means.

For example, AI-assisted could mean the tool helped with transcription, translation, background research, headline suggestions, script drafting, visual generation, data analysis, or content summarization. These are different levels of involvement. They do not carry the same risk.

Saying nothing may also be a governance position in some limited contexts, but leadership should own that decision clearly. Silence should not become the default because nobody agreed on disclosure rules.

The Cost of Skipping the Meeting

One focused half-day can prevent months of confusion.

It will not replace a full AI governance framework. It will not solve every future risk. It will not remove the need for training, review, policy updates, and staff guidance.

But it gives the organization a baseline.

Without that baseline, AI adoption becomes fragmented. Different teams develop different habits. Supervisors make their own calls. Producers rely on personal judgment. Disclosure becomes inconsistent. Accountability becomes unclear.

That is exactly where governance gaps begin.

The cost of skipping the meeting is not theoretical. It shows up later as reactive policy writing, public corrections, internal confusion, editorial disputes, reputational damage, and loss of audience trust.

For broadcasters, AI is not only a tool question.

It is an editorial responsibility question.

Before any AI tool goes live, leadership should answer the basics on paper:

What decisions remain human?

Who is accountable when the tool is wrong?

What does disclosure look like?

These are not technology questions.

They are governance questions.

And they should be answered before the newsroom has to learn them through an avoidable mistake.