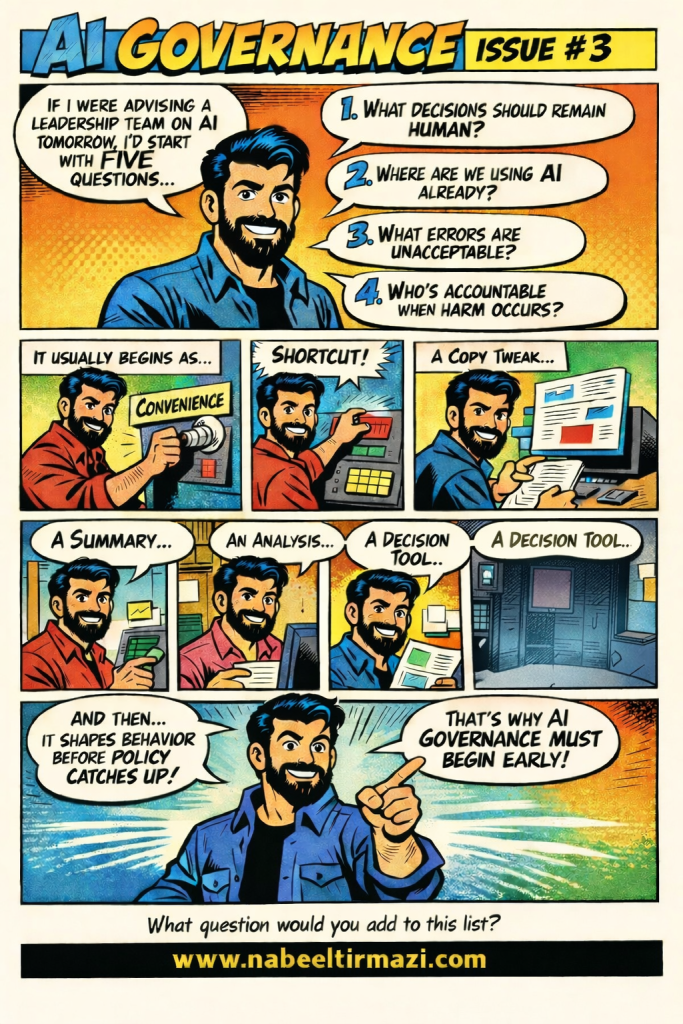

If I were walking into a leadership advisory session on AI tomorrow, I would not open with a framework. I would not begin with a policy template or a risk matrix. I would start with five questions, because the answers reveal everything about whether an organization is governing AI or simply tolerating it.

The Five Questions

1. What decisions should remain human?

This is the foundational question, and most leadership teams have never formally discussed it. In the absence of an explicit answer, the default is that AI can be used for anything that feels convenient. That is not a policy position. It is an abdication of one.

Every organization using AI needs a documented list of decision categories reserved for human judgment: editorial calls, personnel decisions, audience-facing communications, legal and ethical determinations. Without that list, AI will gradually expand into those spaces not because of a deliberate choice, but because no one drew the boundary.

2. Where are we already using AI?

In almost every organization I have worked with across Asia-Pacific, the honest answer to this question is: we are not entirely sure. AI adoption in most institutions is decentralized and informal, and it moves faster than the reporting systems designed to track it.

Staff are using AI tools for drafts, translations, summaries, research, and scheduling, and in many cases their managers do not know. Leadership cannot govern what it cannot see. A structured AI use audit, a process for mapping current AI use across teams, is a governance prerequisite. Not an optional exercise.

3. What errors are unacceptable?

Every AI system produces errors. The question is not whether errors will occur. The question is which errors cross a threshold the organization cannot accept on legal, ethical, reputational, or editorial grounds.

A broadcaster using AI-assisted subtitles can tolerate a minor timing error. It cannot tolerate a factual mistranslation in a breaking news broadcast. A training organization using AI to generate assessment content can tolerate a clunky sentence. It cannot tolerate a discriminatory or factually false question reaching participants. Naming the unacceptable errors in advance creates the criteria for quality review, human oversight, and escalation protocols. It also forces a conversation that most teams are currently avoiding.

4. Who is accountable when harm occurs?

This question makes people uncomfortable, which is exactly why it must be asked in leadership settings rather than left to legal teams after the fact. AI-generated harm, whether misinformation, a privacy breach, discriminatory output, or reputational damage, will at some point affect organizations using AI at scale.

When that moment arrives, accountability cannot be assigned by pointing at the tool. It lives with the people who chose the tool, deployed it, reviewed its outputs, and set the policies governing its use. Leadership teams need to assign those accountability roles clearly and in writing before an incident occurs, not in response to one.

5. What is the fifth question you are not asking?

This one I leave open intentionally, because every organization’s governance gap is slightly different. For some it is about data privacy. For others it is about staff consent. For media organizations it is often about editorial independence and source protection. For public institutions it is frequently about equity and access. The fifth question is the one your specific context demands, and only your team can identify it.

Why Governance Must Begin Early

AI does not arrive in an organization as a governance challenge. It arrives as a convenience. Then a shortcut. Then a drafting tool. Then a summary assistant. Then a decision support system. At each stage the adoption feels incremental and manageable.

The cumulative effect is that AI begins shaping organizational behavior (workflows, habits, outputs, and standards) before any policy framework has been designed to guide it. By the time leadership recognizes the governance gap, the informal norms are already established and far harder to shift.

This is the core argument for early governance. Not that AI is dangerous, but that ungoverned AI adoption creates patterns of behavior that become progressively more difficult and costly to correct.

Start with the five questions. Build the governance layer around the answers. Do it before the convenience becomes the culture.