AI adoption in newsrooms around the world is being slowed by a common mistake.

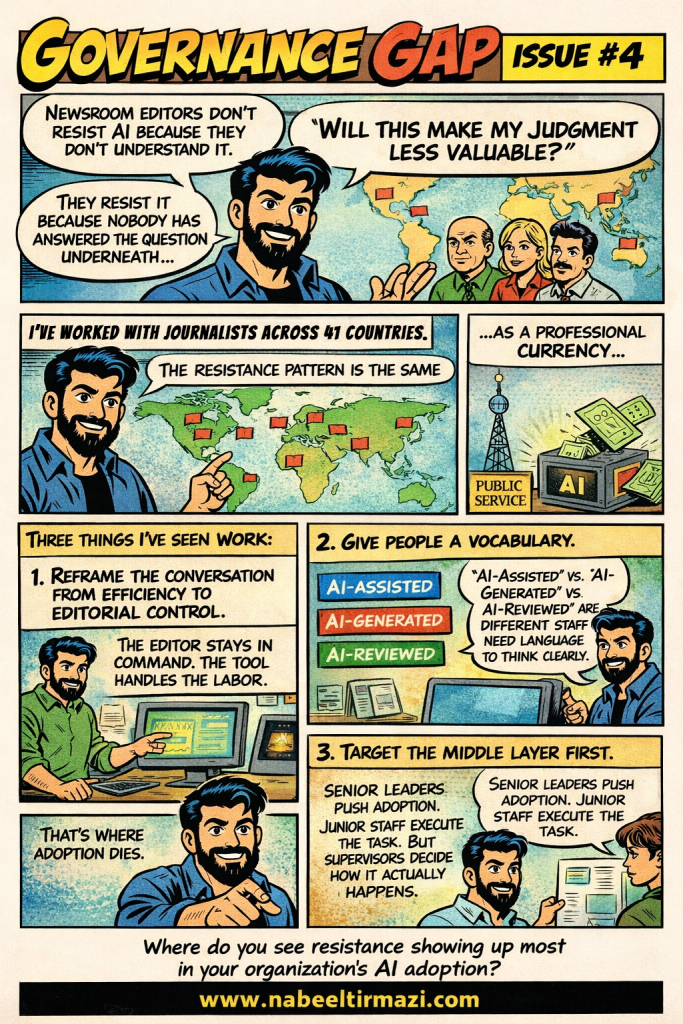

Leadership often assumes editors resist AI because they do not understand the technology. The usual response is more training, more tool demos, and more excitement from the top.

Still, the resistance remains.

The deeper issue is rarely technical. Many editors are asking a quieter question:

Will AI make my judgment less valuable?

Until that concern is addressed directly, tool demonstrations will have limited impact.

Experienced editors have spent years building professional credibility. Their value sits in their judgment: assessing a source, reading risk, understanding audience context, and making editorial calls under pressure.

That judgment is more than a skill. It is part of their professional identity.

So when AI enters the newsroom with promises of speed and efficiency, many editors hear something else. They hear a challenge to the value of the judgment they have spent decades developing.

This is the professional currency problem.

For newsroom leaders, this means AI adoption should be treated as a governance and change-management issue. Technical training alone will not solve it.

The first shift is in framing.

If AI is introduced mainly as a productivity tool, experienced editors may feel positioned as the obstacle. The message can sound like: the newsroom needs to move faster, and AI will help reduce the friction.

A better frame is editorial control.

Editors need to see AI as a tool under their command. The machine can support research, summarization, transcription, translation, monitoring, and drafting. The editorial decision still belongs to the human professional.

That distinction matters. It changes how editors relate to the technology and how they explain its use to their teams.

The second issue is vocabulary.

Many newsrooms use the word “AI” too broadly. Staff may use the same label for very different editorial practices.

AI-assisted content, AI-generated content, and AI-reviewed content carry different levels of human involvement, risk, disclosure responsibility, and editorial accountability.

Without shared language, staff cannot classify their own work clearly. If they cannot classify it, they cannot govern it responsibly.

The third issue is the middle layer.

AI adoption efforts often focus on senior leadership and junior staff. Leaders set direction. Junior staff test tools quickly. But the people who decide whether AI becomes part of daily newsroom practice are usually in between: the section editors, senior producers, team leads, and department heads.

They shape informal newsroom norms. They answer practical questions. They decide when extra review is needed. They influence whether junior staff use AI carefully or casually.

When this layer is not equipped, adoption stalls. The policy may exist. The leadership message may be clear. Daily practice still follows the judgment of supervisors on the newsroom floor.

Editor resistance should be read as useful information.

It shows where governance has not yet landed. It shows where professional concerns have not been answered. It also shows where strong editorial values still exist.

The editors who push back hardest are often the ones most invested in quality, accuracy, and public trust. That resistance should be brought into the governance process, not dismissed as fear of technology.

The real question for media organizations is simple:

Where is AI resistance appearing in your newsroom, and what is it trying to tell you?